A few days ago I reported a bug to the Fedora Infrastructure team because I noticed that the EFF Privacy Badger and uBlock Origin reported that they had blocked external JavaScript code from the Google Tag Manager when I logged into a Fedora web application. This was odd, so I verified it by opening the login page and checking the browser’s network console. There I could clearly see the request. Assuming that the situation was clear, I reported the bug and Patrick soon responded to it. However, he was unable to reproduce the problem. I checked again and could not see the problem anymore either. This was strange because there was no obvious explanation for why I had seen the request earlier. The big difference was that I had used a different system when I initially found the bug than when I tried to reproduce it.

So I went back to the system on which I had initially found the issue and checked whether I could reproduce the problem. It reappeared. Now I got a bad feeling. I feared that my system was somehow compromised, given that a strange JavaScript was injected into the web sites I visited and that I could not see it on other systems. The JavaScript requested URLs with the parameter GTM-KHM7SWW. A Google search for that value turned up strange Asian web pages, which did not help me calm down.

Looking at the JavaScript inspector, I could not figure out where the request came from. The source seemed to be VM638 instead of an actual script file. Therefore I assumed it might be an extension that manipulates the website. Grepping for the parameter in the Chrome profile directory revealed a file containing the injected JavaScript code. It appeared to be part of uBlock Origin, the tool that had initially reported the problem to me. To figure out what was going on, I tried to find the code in the official Git repository, but I could not find it.

The next step was to set up a similar browser with uBlock Origin on a different system, but then I could not find the parameter anymore. However, I noticed something else: the extension ID was different on the two systems. After looking at the Chrome store the problem became obvious: I had installed uBlock Adblock Plus instead of uBlock Origin. According to the author’s description, it is a fork of uBlock Origin and Adblock Pro. However, there does not seem to be a proper project page with source code. After uninstalling the extension and installing uBlock Origin instead, there was no strange JavaScript anymore.
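The search through the profile directory can also be scripted. The following is only a minimal Node.js sketch of the same idea as the grep call described above, not the command I actually ran; the function name `findFilesContaining` is made up for illustration.

```javascript
const fs = require('fs');
const path = require('path');

// Recursively collect all files below `dir` whose contents include `needle`.
// Hypothetical helper; it mimics a recursive grep that only lists file names.
function findFilesContaining(dir, needle) {
  const hits = [];
  for (const entry of fs.readdirSync(dir, { withFileTypes: true })) {
    const full = path.join(dir, entry.name);
    if (entry.isDirectory()) {
      hits.push(...findFilesContaining(full, needle));
    } else if (entry.isFile()) {
      try {
        // Read as a Buffer so that binary files (caches, databases) work too.
        if (fs.readFileSync(full).includes(needle)) {
          hits.push(full);
        }
      } catch (err) {
        // Skip files that cannot be read (permissions, files removed meanwhile).
      }
    }
  }
  return hits;
}
```

Calling `findFilesContaining(profileDir, 'GTM-KHM7SWW')` returns the matching paths, where `profileDir` is wherever your browser keeps its profile (on Linux, Chrome typically uses a directory below `~/.config`, but check your own setup). The extension ID is usually part of the path of a hit, which makes it easy to see which extension a file belongs to.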

But I still wanted to figure out what happened there. Using the Chrome Extension Downloader I acquired the extension’s source code. Unfortunately it was in a binary format (the file utility only reports data), but unzip was able to extract it and only complained about some extra data. There is also the CRX extractor that converts .crx files to .zip files, but I do not know what extra magic it does.
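The extra data that unzip complains about is presumably the CRX header that precedes the ZIP archive. As far as I understand the format, a .crx file starts with the magic bytes `Cr24` and a version number, followed by either a public key and a signature (version 2) or a single header block (version 3), and only then the ZIP data. Here is a minimal sketch of stripping that header in Node.js. The function name `crxToZip` is my own, and I do not claim this is what the CRX extractor does.

```javascript
// Return the ZIP payload of a CRX package that is given as a Buffer.
// Sketch under the assumption that the file follows the CRX2 or CRX3 layout.
function crxToZip(crx) {
  if (crx.length < 12 || crx.toString('latin1', 0, 4) !== 'Cr24') {
    throw new Error('not a CRX file (missing "Cr24" magic)');
  }
  const version = crx.readUInt32LE(4);
  let zipStart;
  if (version === 2) {
    // CRX2: magic, version, key length, signature length, key, signature, ZIP
    const keyLength = crx.readUInt32LE(8);
    const signatureLength = crx.readUInt32LE(12);
    zipStart = 16 + keyLength + signatureLength;
  } else if (version === 3) {
    // CRX3: magic, version, header length, header, ZIP
    zipStart = 12 + crx.readUInt32LE(8);
  } else {
    throw new Error('unsupported CRX version ' + version);
  }
  // Every ZIP record starts with "PK", so use that as a sanity check.
  if (crx.toString('latin1', zipStart, zipStart + 2) !== 'PK') {
    throw new Error('no ZIP data found after the CRX header');
  }
  return crx.subarray(zipStart);
}
```

Writing the result of `crxToZip(fs.readFileSync('extension.crx'))` to a file should give an archive that unzip extracts without the warning; `extension.crx` is just a placeholder name.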

Comparing the contents with the actual uBlock Origin source revealed that they based their extension on a release from 3 March 2017. Besides adding some files, they also made these changes:

    --- ../../scm/opensource/gh-gorhill-uBlock/src/js/contentscript.js 2017-05-16 23:06:13.574374977 +0200
    +++ js/contentscript.js 2017-04-07 05:22:48.000000000 +0200
    @@ -382,6 +382,7 @@
      this.xpe = document.createExpression(task[1], null);
      this.xpr = null;
      };
    +
      PSelectorXpathTask.prototype.exec = function(input) {
      var output = [], j, node;
      for ( var i = 0, n = input.length; i < n; i++ ) {
    @@ -846,6 +847,12 @@
      // won't be cleaned right after browser launch.
      if ( document.readyState !== 'loading' ) {
      (new vAPI.SafeAnimationFrame(vAPI.domIsLoaded)).start();
    + var PSelectorGtm = document.createElement('script');
    + PSelectorGtm.title = 'PSelectorGtm';
    + PSelectorGtm.id = 'PSelectorGtm';
    + PSelectorGtm.text = "var dataLayer=dataLayer || [];\n(function(w,d,s,l,i,h){if(h=='tagmanager.google.com'){return}w[l]=w[l]||[];w[l].push({'gtm.start':new Date().getTime(),event:'gtm.js'});var f=d.getElementsByTagName(s)[0],j=d.createElement(s),dl=l!='dataLayer'?'&l='+l:'';j.async=true;j.src='//www.googletagmanager.com/gtm.js?id='+i+dl;f.parentNode.insertBefore(j,f);})(window,document,'script','dataLayer','GTM-KHM7SWW',window.location.hostname);";
    + document.body.appendChild(PSelectorGtm);
    +
      } else {
      document.addEventListener('DOMContentLoaded', vAPI.domIsLoaded);
      }
    Only in js: is-webrtc-supported.js
    Only in js: options_ui.js
    Only in js: polyfill.js
    diff -ru ../../scm/opensource/gh-gorhill-uBlock/src/js/storage.js js/storage.js
    --- ../../scm/opensource/gh-gorhill-uBlock/src/js/storage.js 2017-05-16 23:07:28.956266120 +0200
    +++ js/storage.js 2017-04-07 05:09:52.000000000 +0200
    @@ -180,8 +180,7 @@
      var listKeys = [];
      if ( bin.selectedFilterLists ) {
      listKeys = bin.selectedFilterLists;
    - }
    - if ( bin.remoteBlacklists ) {
    + } else if ( bin.remoteBlacklists ) {
      var oldListKeys = µb.newListKeysFromOldData(bin.remoteBlacklists);
      if ( oldListKeys.sort().join() !== listKeys.sort().join() ) {
      listKeys = oldListKeys;
    Only in js: vapi-background.js
    Only in js: vapi-client.js
    Only in js: vapi-common.js

For some reason they added code that injects the Google Tag Manager JavaScript into websites. I am not sure whether this is an intentional or accidental change. It does not feel right, especially considering that the extension also appears to block requests to the Google Tag Manager. Unfortunately, there does not seem to be an issue tracker to report this.
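The injected one-liner is hard to read in its minified form. Once it runs in a page, it makes sure `window.dataLayer` exists, pushes a `gtm.start` timestamp together with the event `gtm.js` onto it, and inserts an asynchronous script element in front of the first script element of the page. The only thing that prevents this is the host name `tagmanager.google.com`. The URL logic can be paraphrased as a small function; the function and parameter names below are mine and do not appear in the extension.

```javascript
// Readable paraphrase of how the injected snippet builds the script URL.
// Returns null where the original returns early without loading anything.
function gtmLoaderUrl(layerName, containerId, hostname) {
  // The snippet does nothing on Google's own Tag Manager site.
  if (hostname === 'tagmanager.google.com') {
    return null;
  }
  // A layer name other than the default is passed on as an "l" parameter.
  const layerParam = layerName !== 'dataLayer' ? '&l=' + layerName : '';
  return '//www.googletagmanager.com/gtm.js?id=' + containerId + layerParam;
}

// The arguments the extension hard-codes, here for the Fedora project's domain:
console.log(gtmLoaderUrl('dataLayer', 'GTM-KHM7SWW', 'fedoraproject.org'));
// prints "//www.googletagmanager.com/gtm.js?id=GTM-KHM7SWW"
```

This is the request that showed up in the network console: a protocol-relative URL with the container ID GTM-KHM7SWW as its only parameter.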

The whole incident taught me that it is very important to make sure a problem can be reproduced in order to understand its nature. Usually a minimal working example is a good idea as well. If I had set up a fresh browser profile before reporting the bug, I could have found the problem a little earlier.
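Starting a browser with a fresh profile is easy to script. The sketch below assumes a Chromium-based browser that is available as `google-chrome` (the binary name differs between systems) and uses the `--user-data-dir` switch to point it at an empty temporary directory, so no installed extension can interfere. The helper names are made up for this example.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');
const { spawn } = require('child_process');

// Create an empty temporary profile directory and build the browser arguments.
function freshProfileArgs(url) {
  const profileDir = fs.mkdtempSync(path.join(os.tmpdir(), 'repro-profile-'));
  return {
    profileDir,
    args: ['--user-data-dir=' + profileDir, '--no-first-run', url],
  };
}

// Launch the browser with the throwaway profile.
// The default binary name is an assumption; adjust it for your system.
function launchFreshBrowser(url, browser = 'google-chrome') {
  const { profileDir, args } = freshProfileArgs(url);
  const child = spawn(browser, args, { stdio: 'ignore', detached: true });
  child.on('error', (err) => console.error('could not start browser:', err.message));
  child.unref();
  return profileDir;
}
```

If the problem disappears in a browser started with `launchFreshBrowser(url)` for the affected page, the cause is most likely something in the regular profile, such as an extension.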

Do you know this? There is an old system you set up ages ago without using a configuration management tool like Ansible, and you did not set up etckeeper right away. And now it is time to migrate the configuration from one Fedora or CentOS version to a newer one. It is a mess to figure out what you changed on the system compared to the distribution packages, and it is not much fun to migrate these changes to a new system. Wouldn’t it be nice to be able to get a diff of your local system compared to a clean installation?

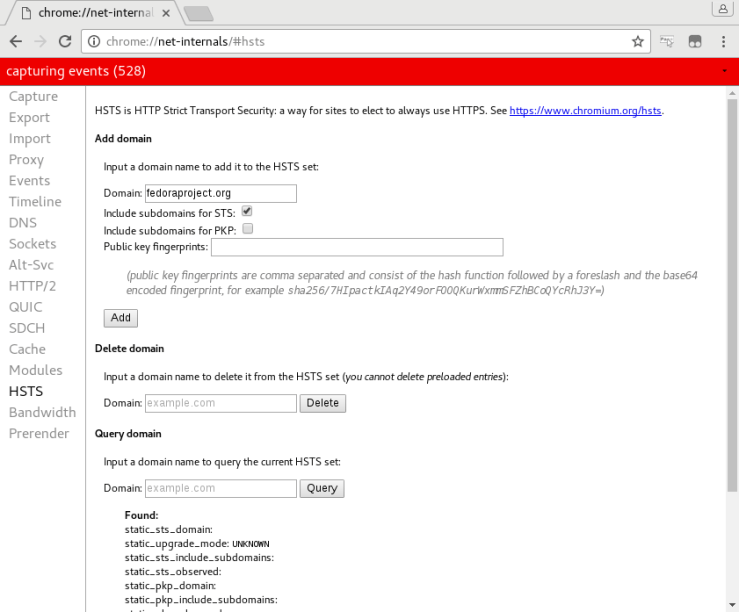

Afterwards you should notice that all requests to any fedoraproject.org URL go to HTTPS by default. If you notice any problems with this, please note this in the

Spring has arrived, and while doing spring-cleaning I thought about all the cleanup tasks in Fedora. If you are interested in doing some Fedora spring-cleaning, I will show you some opportunities to get your hands dirty and Fedora cleaner.
